SynDRA-BBox

Synthetic Dataset for Railway Applications with Bounding Boxes


SynDRA-BBox is an extension of the original SynDRA dataset, specifically designed for 2D/3D object detection in railway environments. While the original SynDRA dataset focuses on image-level data captured from simulated railway scenarios, SynDRA-BBox introduces precise 2D and 3D bounding box annotations for a diverse set of railway objects, including trains, pedestrians, and natural obstacles.

Key features:

- Fully annotated 3D point clouds using simulated LiDAR data, aligned with RGB images.

- 2D and 3D bounding boxes for major object categories relevant to railway operation and safety.

- Maintains the visual diversity and domain fidelity of the original SynDRA environments, including urban, rural, and station scenes.

The dataset is intended for benchmarking tasks such as:

- 2D/3D object detection

- Sensor fusion (RGB + LiDAR)

- Semi-supervised and unsupervised domain adaptation

- Synthetic-to-real transfer learning

A public version of SynDRA-BBox, exactly as used in our experiments, is released to support reproducibility. This version contains only the point clouds for cars and pedestrians, matching the data used in the paper, and is available at the following link:

SynDRA-BBox v0.

In addition, we release an extended and updated version of the dataset, which includes the full set of annotated classes, improved collision shapes for both vehicles and pedestrians, and realistic noise added to the point clouds. This enhanced version is intended to support further research and development beyond the scope of the published paper. You can download the full dataset, which includes 72 sequences plus 2 bonus sequences from multi-sensor systems with corresponding annotations, or download individual scenarios.

Each scenario consists of 8 distinct sequences, each focused on a specific object of interest: car, truck, bus, crossing pedestrian, parallel pedestrian, tree with leaves, tree without leaves, and rocks. These objects either cross the tracks, move parallel to the railway, or lie on the rails in the path of the train.

To ensure visual diversity, every sequence within a scenario uses a different combination of textures for the ballast, sleepers, terrain, and crossing platform, randomly selected from a common pool of 10. The texture pool is shared across all scenarios, but each sequence samples its own combination. We use 5 different car models and various pedestrian models sourced from Epic Games' City Sample. The bus model is the same in all sequences. In contrast, tree and rock models differ in every scenario, with no repetitions.

Each sequence also includes dynamic elements, such as moving vehicles and pedestrians on nearby roads, which are placed in different positions per sequence. The placement of natural objects such as forest elements along the railway is likewise randomized for each sequence to further enrich the environmental variability.

MEGA download links for SynDRA-BBox:

- SynDRA-BBox v1: the whole dataset, ~1.8 TB of data.

- Utils: sensor specifications and Python scripts for converting .bin files to PNG.

SynDRA-BBox was accepted for publication at the IEEE International Conference on Intelligent Rail Transportation (IEEE ICIRT 2025).

Until the conference proceedings are available, please cite the following pre-print if you use SynDRA-BBox in your research:

@article{diaz2025towards,

title={Towards Railway Domain Adaptation for LiDAR-based 3D Detection: Road-to-Rail and Sim-to-Real via SynDRA-BBox},

author={Diaz, Xavier and D'Amico, Gianluca and Dominguez-Sanchez, Raul and Nesti, Federico and Ronecker, Max and Buttazzo, Giorgio},

journal={arXiv preprint arXiv:2507.16413},

year={2025}

}

SynDRA-BBox was developed in close technical collaboration with Xavier Diaz, Raul Dominguez, and Max Ronecker during their time at SETLabs Research GmbH (Munich, Germany). They provided the adaptation methods and conducted the experiments based on SynDRA-BBox.

The Dataset

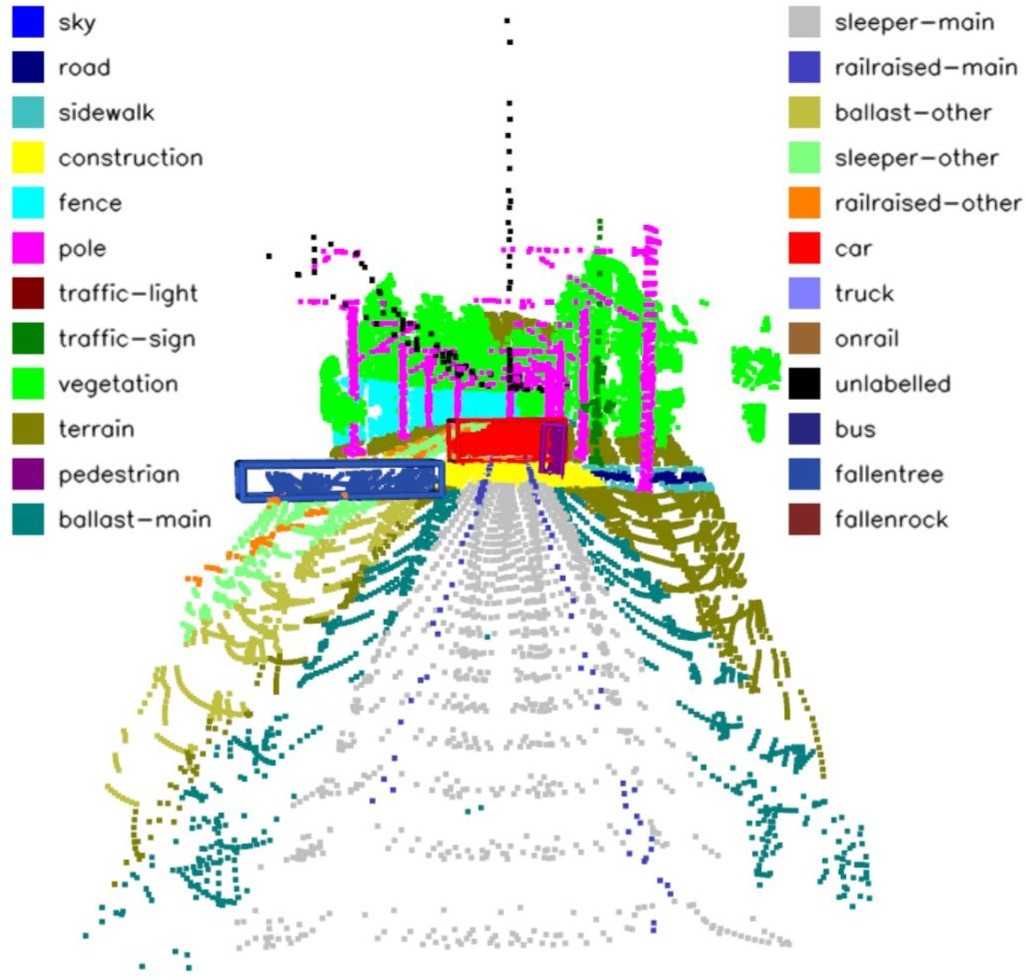

2D/3D Semantic Segmentation and Bounding Boxes Example

Scenario Examples

Sensor Specs - SynDRA-BBox

RGB Camera 0

- Resolution: 2464x1600

- FOV: 30°

- Height from rails: 3.5 m

- Frame Rate: 10 FPS

Stereo RGB Cameras 1/2

- Resolution: 2464x1600

- FOV: 90°

- Baseline: 0.6 m

- Height from rails: 3.5 m

- Frame Rate: 10 FPS
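As a rough illustration of what the camera parameters above imply, the sketch below derives a focal length in pixels and converts a stereo disparity into metric depth. It assumes an ideal pinhole model, rectified stereo images, and that the listed FOV is the horizontal one; none of these assumptions is confirmed by the spec list, and the function names are hypothetical. The authoritative values are in the calibration files shipped with the dataset.

```python
import math


def focal_length_px(image_width_px: int, horizontal_fov_deg: float) -> float:
    """Pinhole focal length in pixels (assumes the FOV is horizontal)."""
    half_fov_rad = math.radians(horizontal_fov_deg) / 2.0
    return (image_width_px / 2.0) / math.tan(half_fov_rad)


def depth_from_disparity(disparity_px: float, focal_px: float, baseline_m: float) -> float:
    """Depth Z = f * B / d for a rectified stereo pair."""
    if disparity_px <= 0:
        raise ValueError("disparity must be positive")
    return focal_px * baseline_m / disparity_px


# Values copied from the spec list above (Stereo RGB Cameras 1/2).
STEREO_WIDTH_PX = 2464
STEREO_FOV_DEG = 90.0
STEREO_BASELINE_M = 0.6

f_stereo = focal_length_px(STEREO_WIDTH_PX, STEREO_FOV_DEG)
depth_at_one_px = depth_from_disparity(1.0, f_stereo, STEREO_BASELINE_M)
```

Under these assumptions the stereo focal length is about 1232 px, so a one-pixel disparity corresponds to roughly 739 m. The same helper applied to RGB Camera 0 (30° FOV) gives a focal length of roughly 4598 px.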

NotRepLidar_0 (Tele-15)

- Non-repeating pattern

- Range: 500 m

- FOV: 15°

- Height: 2.5 m

- Points/scan: 4800

- Rate: 10 Hz

BeamStackLidar_0 (HDL-64E)

- 64-beam scanning pattern

- Range: 120 m

- Horizontal FOV: 180°

- Vertical FOV: 26.8°

- Height: 2.5 m

- Rate: 10 Hz

Annotations

- 2D/3D bounding boxes

- Semantic segmentation (pixel and point-level)

- Depth (up to 650 m)

- Calibration and poses
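Each sequence folder contains a `rail_tumlike_poses.txt` file. Its exact layout is not documented here; the sketch below assumes the file follows the TUM trajectory convention, with one pose per line as `timestamp tx ty tz qx qy qz qw` and `#` marking comment lines. The `Pose` class and `parse_tum_poses` function are hypothetical names used only for illustration, so check the column order against the actual files before relying on it.

```python
from dataclasses import dataclass
from typing import Iterable, List, Tuple


@dataclass(frozen=True)
class Pose:
    timestamp: float
    translation: Tuple[float, float, float]        # (tx, ty, tz)
    quaternion: Tuple[float, float, float, float]  # (qx, qy, qz, qw), assumed order


def parse_tum_poses(lines: Iterable[str]) -> List[Pose]:
    """Parse TUM-style pose lines, skipping blank lines and '#' comments."""
    poses = []
    for line in lines:
        line = line.strip()
        if not line or line.startswith("#"):
            continue
        fields = line.split()
        if len(fields) != 8:
            raise ValueError(f"expected 8 fields, got {len(fields)}: {line!r}")
        t, tx, ty, tz, qx, qy, qz, qw = (float(v) for v in fields)
        poses.append(Pose(t, (tx, ty, tz), (qx, qy, qz, qw)))
    return poses
```

Because the function accepts any iterable of strings, an open file handle can be passed directly, for example `parse_tum_poses(open(path))`.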

Dataset Structure - SynDRA-BBox

The dataset is organized as follows:

SynDRA-BBox/

├── Scenario_0/

│ ├── Bus_0/

│ │ ├── Sunny/

│ │ │ ├── DepthCamera_0/

│ │ │ │ ├── Bin_folder/

│ │ │ │ │ ├── SceBus_0_sun_mor_000000.bin

│ │ │ │ │ ├── SceBus_0_sun_mor_000001.bin

│ │ │ │ │ └── ...

│ │ │ │ ├── Poses/

│ │ │ │ └── Times/

│ │ │ ├── DepthCamera_1/

│ │ │ ├── DepthCamera_2/

│ │ │ ├── RGBCamera_0/

│ │ │ ├── RGBCamera_1/

│ │ │ ├── RGBCamera_2/

│ │ │ ├── SSCamera_0/

│ │ │ ├── SSCamera_1/

│ │ │ ├── SSCamera_2/

│ │ │ ├── NotRepLidar_0/

│ │ │ └── BeamStackLidar_0/

│ │ ├── CameraBBoxes_0.txt

│ │ ├── CameraBBoxes_1.txt

│ │ ├── CameraBBoxes_2.txt

│ │ ├── LidarBBoxesBeamsStackLidar_0.txt

│ │ ├── LidarBBoxesNotRepLidar_0.txt

│ │ └── rail_tumlike_poses.txt

│ ├── Car_0/

│ ├── Crossing_Pedestrian_0/

│ ├── Parallel_Pedestrian_0/

│ ├── Rock_0/

│ ├── Tree_noLeaf_0/

│ ├── Tree_Leaf_0/

│ └── Truck_0/

├── Scenario_1/

├── Scenario_2/

├── Scenario_3/

├── Scenario_4/

├── Scenario_5/

├── Scenario_6/

├── Scenario_7/

├── Scenario_8/

│ ├── Crossing_Pedestrian_8/

│ └── Parallel_Pedestrian_8/

├── Utils/

│ ├── bin_to_png.py

│ ├── bin_to_pcd.py

│ ├── README.md

│ ├── requirements.txt

│ └── Sensor_Specs.txt

└── README.md
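The layout above can be traversed with a few lines of Python. The following is a minimal sketch, not part of the released Utils: it assumes that every sensor folder contains a `Bin_folder` like the one shown for `DepthCamera_0`, and that frame files end in an underscore followed by a zero-padded index, as in `SceBus_0_sun_mor_000000.bin`. The helper names (`iter_sequences`, `frame_index`, `list_frames`) are hypothetical.

```python
import re
from pathlib import Path
from typing import Iterator, List, NamedTuple, Optional


class Sequence(NamedTuple):
    scenario: str  # e.g. "Scenario_0"
    name: str      # e.g. "Bus_0"
    path: Path


def iter_sequences(root: Path) -> Iterator[Sequence]:
    """Yield every sequence folder found under the Scenario_* directories."""
    for scenario_dir in sorted(root.glob("Scenario_*")):
        if not scenario_dir.is_dir():
            continue
        for seq_dir in sorted(p for p in scenario_dir.iterdir() if p.is_dir()):
            yield Sequence(scenario_dir.name, seq_dir.name, seq_dir)


# Assumed naming scheme: "<prefix>_<frame index>.bin"
FRAME_RE = re.compile(r"_(\d+)\.bin$")


def frame_index(filename: str) -> Optional[int]:
    """Return the trailing frame index of a .bin file name, or None."""
    match = FRAME_RE.search(filename)
    return int(match.group(1)) if match else None


def list_frames(sequence_dir: Path, weather: str, sensor: str) -> List[Path]:
    """List the .bin frames of one sensor, ordered by frame index."""
    folder = sequence_dir / weather / sensor / "Bin_folder"
    frames = [p for p in folder.glob("*.bin") if frame_index(p.name) is not None]
    return sorted(frames, key=lambda p: frame_index(p.name))
```

A typical call would be `list_frames(seq.path, "Sunny", "DepthCamera_0")` for each `seq` returned by `iter_sequences(Path("SynDRA-BBox"))`. Non-scenario folders such as `Utils/` are skipped by the `Scenario_*` pattern.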